In short? Yes. Class over. Thanks for coming…

No? Ok, I suppose maybe I should explain why I feel this way. In this brief article I will attempt to explain reactive programming from a high-level, ivory-tower standpoint. I’m not planning on getting into implementation details. There are many, many ways to go about it, and I would not presume to understand what all of my readers’ requirements are. But I hope that by the end of this you will at least be curious enough to follow my resource links to learn more, and maybe even do your own research to see how you might apply these principles in your own environment.

What is reactive programming?

Reactive programming (RP) is a set of programming patterns and techniques that were designed to handle the new landscape of computing. Some examples of what I mean by “new landscape”:

Changing requirements (Chart courtesy of Martin Odersky)

| Requirement | ~10 years ago | Today (2014/2015) |
| --- | --- | --- |
| Server nodes | 10’s | 1000’s |
| Response times | seconds | milliseconds |
| Acceptable downtime | hours | 0 |
| Data volume | GBs | TBs -> PBs |

These new requirements are not simply incremental changes. They represent a complete paradigm shift. To me, the key points here are “Response times” and “Acceptable downtime”. These drive everything else. The increase in data is a direct response to customers wanting better experiences. However, it is also due to much more fine-grained monitoring and statistics. Regardless, the above is a reality. And if you aren’t dealing with this now, it is all but guaranteed you will be.

The new architectures that began to evolve from these new requirements needed to have the following characteristics:

- Event-driven – produce, propagate, consume and react to messages.

- Scalable – The ability to react to changing amounts of load, preferably without downtime.

- Resilient – Can react to failures without impacting client experience.

- Responsive – Will react to users promptly. (a.k.a. no progress bars!)

All of these characteristics must be addressed to present a truly reactive architecture. In reality, I believe that most implementations will represent a subset of the above. However, these subsets, while functionally achieving the end goal of RP, would not be RP Certified (if such a thing really existed). Whether this matters to you is along the same lines as the debates amongst REST practitioners. I’m not going to step into that ring.

Event-Driven Architecture

One common approach to meeting these requirements is an event-driven architecture (EDA). If you are not familiar with the concept, I suggest checking out one of the resource links at the bottom. In short, EDA is an architecture where the system is designed to react to events sent from other parts of the system, asynchronously and without blocking. An event, strictly speaking, is a notification that some state has changed in some part of the system. For example, if you are creating a system for a library and someone decides to check out a book, an event will be ‘raised’ that any part of the system may choose to consume. In this scenario, one system that would absolutely be interested is the one responsible for keeping track of the library’s inventory. When it receives the checked-out event, it will change the inventory to reflect that the book is no longer available to be borrowed. There would likely be many more systems interested in this event as well.
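To make the library example a little more concrete, here is a minimal sketch of the publish/subscribe idea. Everything in it (`EventBus`, `BookCheckedOut`, `Inventory`, the ISBN strings) is a hypothetical name invented for illustration, and for brevity the bus delivers events synchronously inside one process. A real EDA would typically hand events to a queue or message broker so that producers never block on consumers.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class BookCheckedOut:
    """Hypothetical event: a notification that a book's state changed."""
    isbn: str
    patron_id: str


class EventBus:
    """Tiny in-process publish/subscribe hub (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[type, List[Callable]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Callable) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: object) -> None:
        # The producer has no idea who (if anyone) consumes the event.
        for handler in self._handlers[type(event)]:
            handler(event)


class Inventory:
    """Hypothetical consumer that tracks which books can be borrowed."""

    def __init__(self, available: set) -> None:
        self.available = set(available)

    def on_checked_out(self, event: BookCheckedOut) -> None:
        self.available.discard(event.isbn)


bus = EventBus()
inventory = Inventory({"isbn-a", "isbn-b"})
notices: List[str] = []

# Two independent consumers react to the same event.
bus.subscribe(BookCheckedOut, inventory.on_checked_out)
bus.subscribe(BookCheckedOut, lambda e: notices.append(f"due-date notice for {e.patron_id}"))

bus.publish(BookCheckedOut(isbn="isbn-a", patron_id="patron-42"))
```

Note that the code publishing the event never references `Inventory` directly. Adding a third interested system is one more `subscribe` call, which is the decoupling the rest of this article leans on.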

By providing an event-driven architecture in this manner, you allow for a system composed of highly decoupled, composable parts. In practice, these parts are typically services, preferably lightweight with a single responsibility. (Often referred to as microservices these days.)

So, how does this all fit into creating a “reactive” system that is scalable, resilient, and responsive? Well, let me address each of these individually.

Scalable

Event-driven architectures (EDAs) provide scalability via their highly decoupled nature. By decomposing a system into well-defined pieces with clear process boundaries, you can scale a system’s components independently of each other. In the library example, you may find a bottleneck at the point where books are checked out just prior to the final paper due dates at the local university. (Not that I would have ever been one of these students! 😉 ) In anticipation of this increase, you could allocate additional nodes that simply emit the checked-out messages. (I realize that you may, in turn, need to scale out the consumers of the checked-out message.)
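As a rough sketch of scaling the consumer side independently, the snippet below runs a configurable number of consumers against one shared queue of checked-out events. It uses an in-process `asyncio.Queue` as a stand-in for a real message broker, and the names `consumer`, `run_checkouts`, and the `book-N` events are assumptions made up for this example.

```python
import asyncio
from typing import List, Tuple


async def consumer(name: str, queue: asyncio.Queue, handled: List[Tuple[str, str]]) -> None:
    """Hypothetical consumer: pull events off the shared queue forever."""
    while True:
        event = await queue.get()
        await asyncio.sleep(0.01)  # stand-in for real work (e.g. a database write)
        handled.append((name, event))
        queue.task_done()


async def run_checkouts(events: List[str], consumer_count: int) -> List[Tuple[str, str]]:
    queue: asyncio.Queue = asyncio.Queue()
    handled: List[Tuple[str, str]] = []
    # Scaling out is just a matter of changing consumer_count;
    # the producer side below does not change at all.
    workers = [
        asyncio.create_task(consumer(f"consumer-{i}", queue, handled))
        for i in range(consumer_count)
    ]
    for event in events:
        queue.put_nowait(event)
    await queue.join()  # wait until every event has been handled
    for worker in workers:
        worker.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return handled


events = [f"book-{i}" for i in range(20)]
handled = asyncio.run(run_checkouts(events, consumer_count=4))
```

In a real deployment the consumers would be separate processes or nodes rather than tasks on one event loop, but the shape is the same: producers and consumers only share the queue, so either side can grow without the other knowing.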

Because of the level of decoupling that EDA provides, the location of producers or consumers of events is completely irrelevant. This allows for additional scale-out opportunities such as cross-datacenter (for self-hosted solutions) or cross-AZ/region (for cloud-hosted solutions) scaling. This leads to many different options that reinforce not only scalability but resiliency and responsiveness as well. We will discuss these independently.

Resilient

The scalability options described above provide a significant start on the path to resiliency. By being able to scale out in this manner, you are making your system more resilient to individual node failures, network failures and even the rare AZ/region outage.

However, the resiliency of an RP architecture, when designed properly, goes well beyond this. One foundational aspect is to build in the ability to supervise processes, detect their failure and restart them. In order to do this properly, you need to understand the actor model and supervision trees.

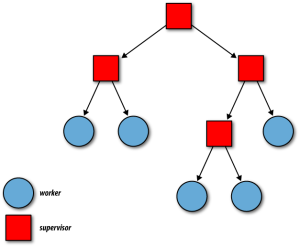

Courtesy Erlang Programming (Cesarini, Thompson 2009)

Thinking in actors and supervisors will be a bit of a mental shift for most engineers and architects familiar with traditional OO models. However, spending the time to comprehend them will pay off in spades. They are incredibly valuable in building resilient and scalable systems. I won’t go into much detail on this subject. However, at a high level, supervision trees allow you to detect failures in workers and restart them if they fail or block unpredictably. In addition, actors facilitate concurrency by encapsulating small units of logic without any shared state (preferably).
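The core of the supervision idea fits in a few lines. The sketch below is not how Erlang/OTP or any actor library implements it; it is a deliberately small illustration in plain `asyncio`, and `supervise`, `flaky_worker`, and `max_restarts` are hypothetical names. The supervisor restarts a crashed worker a limited number of times and, once that budget is spent, lets the failure propagate upward (where, in a real tree, a parent supervisor would decide what to do).

```python
import asyncio
from typing import Awaitable, Callable


async def supervise(worker_factory: Callable[[], Awaitable[str]], max_restarts: int = 3) -> str:
    """Run a worker; if it crashes, start a fresh one, up to max_restarts times."""
    restarts = 0
    while True:
        try:
            return await worker_factory()
        except Exception:
            if restarts >= max_restarts:
                raise  # escalate: this supervisor gives up
            restarts += 1  # otherwise loop around and start a new worker


attempts = {"count": 0}


async def flaky_worker() -> str:
    """Hypothetical worker that crashes on its first two runs."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("simulated crash")
    return "done"


result = asyncio.run(supervise(flaky_worker))
```

The worker itself contains no retry or recovery logic. It is allowed to simply fail, and the decision about what happens next lives in one place above it.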

This model also has the advantage of allowing scaling and resiliency across service boundaries, meaning that if one service goes down, the entire system will not. As an example, if Twitter loses the system that makes suggestions for similar feeds, Twitter will stay up while that piece is repaired. From a client perspective this can make the difference between a pleasurable experience and one that makes you leave for good.

Responsive

Reactive systems are kept responsive by their inherently asynchronous nature. Rather than firing a request and waiting for a response from a server, you make a request (or many) and continue on your merry way. As responses are received you process them in-line and, if a UI is part of the mix, you update it accordingly. An example of this would be when you view a Twitter feed and posts may not all show up at once. In fact, they often do not appear in the order they were created due to the fact that Twitter shards its tweets. This means that the tweets you are reading may be getting retrieved from databases distributed across the globe.
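Here is a small sketch of “make many requests and handle each response as it arrives”, using `asyncio.as_completed` from the Python standard library. The shard names and delays are invented, and `fetch_shard` just sleeps to simulate network latency rather than calling any real service.

```python
import asyncio
from typing import List, Tuple


async def fetch_shard(name: str, delay: float) -> str:
    """Hypothetical request to one shard; the sleep simulates network latency."""
    await asyncio.sleep(delay)
    return f"tweets from {name}"


async def load_feed(shards: List[Tuple[str, float]]) -> List[str]:
    arrived: List[str] = []
    requests = [fetch_shard(name, delay) for name, delay in shards]
    # All requests are in flight at once; results are handled in arrival
    # order, not in the order the requests were issued.
    for finished in asyncio.as_completed(requests):
        arrived.append(await finished)  # a UI would be updated here
    return arrived


feed = asyncio.run(load_feed([("eu", 0.15), ("us", 0.01), ("asia", 0.08)]))
```

The slowest shard never holds up the faster ones, which is why posts in a feed can appear out of order: whatever comes back first gets rendered first.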

By adding EDA into the mix, state-change events can update a system dynamically as they occur. So, using the Twitter example again, as a tweet is created by someone you follow, it will be propagated to the feed that you are viewing without you needing to request the page again.

In addition, as mentioned previously, the ability to scale out across regions makes it possible to locate services in a geographically dispersed manner. By doing so, you can implement latency-based routing to route requests to the nearest available node.
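The selection step of latency-based routing can be sketched very simply: among the nodes that are currently healthy, pick the one with the lowest measured latency. The `Node` type, the region names, and the latency figures below are all made up for illustration. In practice this decision is usually made by DNS or a load balancer rather than by application code.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Node:
    """Hypothetical service node with a measured round-trip latency."""
    region: str
    latency_ms: float
    healthy: bool = True


def nearest_available(nodes: Iterable[Node]) -> Optional[Node]:
    """Return the healthy node with the lowest latency, or None if none are up."""
    candidates = [node for node in nodes if node.healthy]
    return min(candidates, key=lambda node: node.latency_ms, default=None)


nodes = [
    Node("us-east", 95.0),
    Node("eu-west", 20.0),
    Node("eu-central", 12.0, healthy=False),  # closest, but currently down
]
chosen = nearest_available(nodes)
```

Here the closest node is unhealthy, so the request goes to the next-nearest one. That is scalability, resiliency, and responsiveness all showing up in the same small decision.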

So… Why All the hubbub?

Well, if you haven’t determined it thus far, RP is a natural reaction to the world of cloud computing. Cloud computing requires a brand new way of thinking about systems architecture. While it obviously provides a wealth of opportunities that were never available prior to its existence, it also introduces an equal number of new challenges. Reactive Programming architectures address many of those challenges in a new, elegant manner.

RP is easiest to build into an architecture from the beginning. However, I can say from experience, it is not all that difficult to retroactively adapt an existing system to, at least functionally, fit into the reactive paradigm. Either way, RP is becoming all the rage because these problems are real. Solving them is a necessity for meeting customer expectations in the modern world of computing. If you are not currently thinking reactively or working toward solving these problems in some other way, you should be. RP is a good, quickly maturing model to follow to that end.

Resources

Event-Driven Architecture

Using Events in Highly Distributed Architectures

Scalability

Nice (simple) Breakdown of to Scale

Reactive Programming

What Does Reactive Mean? (Erik Meijer)

Implementing
